Big Data Applications are an important topic with impact in both academia and industry.

2019

- 1: Introduction

- 2: Introduction (Fall 2018)

- 3: Motivation

- 4: Motivation (cont.)

- 5: Cloud

- 6: Physics

- 7: Deep Learning

- 8: Sports

- 9: Deep Learning (Cont. I)

- 10: Deep Learning (Cont. II)

- 11: Introduction to Deep Learning (III)

- 12: Cloud Computing

- 13: Introduction to Cloud Computing

- 14: Assignments

- 14.1: Assignment 1

- 14.2: Assignment 2

- 14.3: Assignment 3

- 14.4: Assignment 4

- 14.5: Assignment 5

- 14.6: Assignment 6

- 14.7: Assignment 7

- 14.8: Assignment 8

- 15: Applications

- 15.1: Big Data Use Cases Survey

- 15.2: Cloud Computing

- 15.3: e-Commerce and LifeStyle

- 15.4: Health Informatics

- 15.5: Overview of Data Science

- 15.6: Physics

- 15.7: Plotviz

- 15.8: Practical K-Means, Map Reduce, and Page Rank for Big Data Applications and Analytics

- 15.9: Radar

- 15.10: Sensors

- 15.11: Sports

- 15.12: Statistics

- 15.13: Web Search and Text Mining

- 15.14: WebPlotViz

- 16: Technologies

1 - Introduction

Introduction to the Course

created from https://drive.google.com/drive/folders/0B1YZSKYkpykjbnE5QzRldGxja3M

2 - Introduction (Fall 2018)

Introduction to Big Data Applications

This is an overview course of Big Data Applications covering a broad range of problems and solutions. It covers cloud computing technologies and includes a project. Also, algorithms are introduced and illustrated.

General Remarks Including Hype cycles

This is Part 1 of the introduction. We start with some general remarks and take a closer look at the emerging technology hype cycles.

1.a Gartner’s Hypecycles and especially those for emerging technologies between 2016 and 2018

1.b Gartner’s Hypecycles with Emerging technologies hypecycles and the priority matrix at selected times 2008-2015

1.a + 1.b:

- Technology trends

- Industry reports

Data Deluge

This is Part 2 of the introduction.

2.a Business usage patterns from NIST

2.b Cyberinfrastructure and AI

2.a + 2.b

- Several examples of rapid data and information growth in different areas

- Value of data and analytics

Jobs

This is Part 3 of the introduction.

- Job opportunities in data science, clouds, computer science, and computer engineering.

- Job demand in different countries and companies.

- Trends and forecasts of future job demand.

Industry Trends

This is Part 4 of the introduction.

An older set of trend slides is available from:

4a. Industry Trends: Technology Trends by 2014

A current set is available at:

4b. Industry Trends: 2015 onwards

4c. Industry Trends: Voice and HCI, Cars, Deep Learning

- Many technology trends through end of 2014 and 2015 onwards, examples in different fields

- Voice and HCI, Cars Evolving and Deep learning

Digital Disruption and Transformation

This is Part 5 of the introduction.

- Digital Disruption and Transformation

- The past displaced by digital disruption

Computing Model

This is Part 6 of the introduction.

6a. Computing Model: earlier discussion by 2014:

6b. Computing Model: developments after 2014 including Blockchain:

- Industry, including big companies such as Google, Amazon, and Microsoft, has adopted clouds, which are attractive for data analytics.

- Some examples of development: AWS quarterly revenue, critical capabilities for public cloud infrastructure as a service.

- Blockchain: ledgers redone, blockchain consortia.

Research Model

This is Part 7 of the introduction.

Research Model: 4th Paradigm; From Theory to Data driven science?

- The 4 paradigms of scientific research: theory, experiment and observation, simulation of theory or model, and data-driven.

Data Science Pipeline

This is Part 8 of the introduction.

8. Data Science Pipeline

- DIKW process: Data, Information, Knowledge, Wisdom, and Decision.

- Example of Google Maps/navigation.

- Criteria for Data Science platform.

Physics as an Application Example

This is Part 9 of the introduction.

- Physics as an application example.

Technology Example

This is Part 10 of the introduction.

- Overview of many informatics areas, recommender systems in detail.

- Netflix on personalization, recommendation, and data science.

Exploring Data Bags and Spaces

This is Part 11 of the introduction.

- Exploring data bags and spaces: Recommender Systems II

- Distances in funny spaces, about “real” spaces and how to use distances.

Another Example: Web Search Information Retrieval

This is Part 12 of the introduction.

12. Another Example: Web Search Information Retrieval

Cloud Application in Research

This is Part 13 of the introduction discussing cloud applications in research.

- Cloud Applications in Research: Science Clouds and Internet of Things

Software Ecosystems: Parallel Computing and MapReduce

This is Part 14 of the introduction, discussing the software ecosystem.

- Software Ecosystems: Parallel Computing and MapReduce

Conclusions

This is Part 15 of the introduction with some concluding remarks.

15. Conclusions

3 - Motivation

Part I Motivation I

Motivation

Big Data Applications & Analytics: Motivation/Overview; Machine (actually Deep) Learning, Big Data, and the Cloud; Centerpieces of the Current and Future Economy.

00) Mechanics of Course, Summary, and overall remarks on course

This section summarizes the motivation section.

01A) Technology Hypecycle I

Today clouds and big data have passed through the hype cycle (they have emerged), but features like blockchain, serverless, and machine learning are on recent hype cycles, while areas like deep learning have several entries (as in fact do clouds).

- Gartner’s Hypecycles, especially that for emerging technologies in 2019

- The phases of hypecycles

- Priority Matrix with benefits and adoption time

- Initial discussion of the 2019 Hypecycle for Emerging Technologies

01B) Technology Hypecycle II

- Gartner’s Hypecycles, especially that for emerging technologies in 2019

- Details of the 2019 Emerging Technology and related (AI, Cloud) Hypecycles

01C) Technology Hypecycle III

- Gartner’s Hypecycles and Priority Matrices for emerging technologies in 2018, 2017, and 2016

- More details on 2018 can be found in Unit 1A of the 2018 Presentation, and details of 2015 in Unit 1B (Journey to Digital Business)

- Unit 1A in 2018 also discusses 2017

- Data Center Infrastructure was removed, as this hype cycle disappeared in later years

01D) Technology Hypecycle IV

- Emerging Technologies hypecycles and Priority Matrix at selected times 2008-2015

- Clouds star from 2008 to today

- They are mixed up with transformational and disruptive changes

- Unit 1B of the 2018 Presentation has more details of this history, including Priority Matrices

02)

02A) Clouds/Big Data Applications I

- The Data Deluge and Big Data; many of the best examples have NOT been updated (as I can’t find updates), so some slides are old but still make the correct points

- The Big Data Deluge has become the Deep Learning Deluge

- Big Data is an agreed fact; Deep Learning is still evolving fast but has a stream of successes!

02B) Cloud/Big Data Applications II

Clouds in science, where the area is called cyberinfrastructure. The usage pattern from NIST is removed; see the 2018 lecture 2B of the motivation for this discussion.

02C) Cloud/Big Data Usage Trends

Google and related trends; Artificial Intelligence from Microsoft, Gartner, and Meeker.

03) Jobs

In areas like Data Science, Clouds, Computer Science, and Computer Engineering.

04) Industry, Technology, Consumer Trends

Basic trends. The 2018 Lectures 4A and 4B have more details; they were removed as dated but are still valid. See 2018 Lesson 4C for 3 technology trends for 2016: Voice as HCI, Cars, and Deep Learning.

05) Digital Disruption and Transformation

The past displaced by digital disruption; some more details are in the 2018 Presentation Lesson 5.

06)

06A) Computing Model I

Industry adopted clouds, which are attractive for data analytics. Clouds are a dominant force in industry. Examples are given.

06B) Computing Model II

This lesson, with 3 subsections, is removed; please see the 2018 Presentation for it: developments after 2014 (mainly from Gartner), cloud market share, and blockchain.

07) Research Model

4th Paradigm; from theory to data-driven science?

08) Data Science Pipeline

DIKW: Data, Information, Knowledge, Wisdom, Decisions. More details on Data Science Platforms are in the 2018 Lesson 8 presentation.

09) Physics: Looking for Higgs Particle with Large Hadron Collider LHC

Physics as a big data example.

10) Recommender Systems I

General remarks and Netflix example.

11) Recommender Systems II

Exploring Data Bags and Spaces.

12) Web Search and Information Retrieval

Another Big Data example.

13) Cloud Applications in Research

Removed: Science Clouds, Internet of Things. This is a continuation of Part 12. See the 2018 Presentation (same as 2017 for Lesson 13) and Cloud Unit 2019-I this year.

14) Parallel Computing and MapReduce

Software Ecosystems.

15) Online education and data science education

Removed. You can find it in the 2017 version. In @sec:534-week2 you can see more about this.

16) Conclusions

The conclusions are contained in the latter part of Part 15.

Motivation Archive

Big Data Applications and Analytics: Motivation/Overview; Machine (actually Deep) Learning, Big Data, and the Cloud; Centerpieces of the Current and Future Economy. Backup lectures from previous years referenced in the 2019 class.

4 - Motivation (cont.)

Part II Motivation Archive

2018 BDAA Motivation-1A) Technology Hypecycle I

In this section we discuss general remarks, including hype curves.

2018 BDAA Motivation-1B) Technology Hypecycle II

In this section we continue our discussion of general remarks, including hype curves.

2018 BDAA Motivation-2B) Cloud/Big Data Applications II

In this section we discuss clouds in science, where the area is called cyberinfrastructure; the usage pattern from NIST; and Artificial Intelligence from Gartner and Meeker.

2018 BDAA Motivation-4A) Industry Trends I

In this section (Lesson 4A) we discuss many technology trends through the end of 2014.

2018 BDAA Motivation-4B) Industry Trends II

In this section we continue our discussion of industry trends. It includes Lesson 4B, covering many technology adoption trends from 2015 onwards.

2017 BDAA Motivation-4C) Industry Trends III

In this section we continue our discussion of industry trends. It contains Lesson 4C, covering 3 technology trends from 2015 onwards: voice as HCI, cars, and deep learning.

2018 BDAA Motivation-6B) Computing Model II

In this section we discuss computing models. It contains Lesson 6B with 3 subsections: developments after 2014 (mainly from Gartner), cloud market share, and blockchain.

2017 BDAA Motivation-8) Data Science Pipeline DIKW

In this section we discuss data science pipelines, including the data, information, knowledge, and wisdom that form the term DIKW. It also contains some discussion of data science platforms.

2017 BDAA Motivation-13) Cloud Applications in Research Science Clouds Internet of Things

In this section we discuss the Internet of Things and related cloud applications.

2017 BDAA Motivation-15) Data Science Education Opportunities at Universities

In this section we discuss more on data science education opportunities.

5 - Cloud

Part III Cloud {#sec:534-week3}

A. Summary of Course

B. Defining Clouds I

In this lecture we discuss the basic definition of cloud and two very simple examples of why virtualization is important.

In this lecture we discuss how clouds are situated with respect to HPC and supercomputers, and why multicore chips are important in a typical data center.

C. Defining Clouds II

In this lecture we discuss service-oriented architectures and software services as message-linked computing capabilities.

In this lecture we discuss the different aaS’s: Network, Infrastructure, Platform, Software; the amazing services that Amazon AWS and Microsoft Azure have; initial Gartner comments on clouds (they are now the norm) and the evolution of servers; serverless and microservices; and the Gartner hypecycle and priority matrix on Infrastructure Strategies.

D. Defining Clouds III: Cloud Market Share

In this lecture we discuss how important the cloud market shares are and how much money the providers make.

E. Virtualization: Virtualization Technologies

In this lecture we discuss hypervisors and the different approaches: KVM, Xen, Docker, and OpenStack.

F. Cloud Infrastructure I

In this lecture we comment on trends in the data center and its technologies: clouds physically spread across the world, green computing, and the fraction of the world’s computing ecosystem in clouds and associated sizes. An analysis from Cisco of the size of cloud computing is also discussed.

G. Cloud Infrastructure II

In this lecture we discuss the Gartner hypecycle and priority matrix on Compute Infrastructure, containers compared to virtual machines, and the emergence of artificial intelligence as a dominant force.

H. Cloud Software

In this lecture we discuss HPC-ABDS with over 350 software packages and how to use each of its 21 layers; Google’s software innovations; MapReduce in pictures; cloud and HPC software stacks compared; and the components needed to support cloud/distributed system programming.

I. Cloud Applications I: Clouds in science where area called cyberinfrastructure

In this lecture we discuss the science usage pattern from NIST and Artificial Intelligence from Gartner.

J. Cloud Applications II: Characterize Applications using NIST approach

In this lecture we discuss the Internet of Things and different types of MapReduce.

K. Parallel Computing

In this lecture we discuss parallel computing in pictures and some useful analogies and principles.

L. Real Parallel Computing: Single Program/Instruction Multiple Data SIMD SPMD

In this lecture we compare Big Data and Simulations, and we furthermore discuss what is hard to do.

M. Storage: Cloud data

In this lecture we discuss the approaches: repositories, file systems, and data lakes.

N. HPC and Clouds

In this lecture we discuss the Branscomb Pyramid, supercomputers versus clouds, and science computing environments.

O. Comparison of Data Analytics with Simulation

In this lecture we discuss the structure of different applications for simulations and Big Data, software implications, and languages.

P. The Future I

In this lecture we discuss the Gartner cloud computing hypecycle and priority matrix for 2017 and 2019, hyperscale computing, serverless and FaaS, cloud native, microservices, and the update to the 2019 hypecycle.

Q. The Future and other Issues II

In this lecture we discuss security and blockchain.

R. The Future and other Issues III

In this lecture we discuss fault tolerance.

6 - Physics

Physics with Big Data Applications {#sec:534-week5}

E534 2019 Big Data Applications and Analytics: Discovery of Higgs Boson Part I (Unit 8)

Section Units 9-11 Summary: This section starts by describing the LHC accelerator at CERN and the evidence found by the experiments suggesting the existence of a Higgs Boson. The huge number of authors on a paper, and remarks on histograms and Feynman diagrams, are followed by an accelerator picture gallery. The next unit is devoted to Python experiments looking at histograms of Higgs Boson production with various shapes of signal, various backgrounds, and various event totals. Then random variables and some simple principles of statistics are introduced, with an explanation of why they are relevant to Physics counting experiments. The unit introduces Gaussian (normal) distributions and explains why they are seen so often in natural phenomena. Several Python illustrations are given. Random Numbers with their Generators and Seeds lead to a discussion of the Binomial and Poisson Distributions and of Monte-Carlo and accept-reject methods. The Central Limit Theorem concludes the discussion.

Unit 8:

8.1 - Looking for Higgs: 1. Particle and Counting Introduction 1

We return to the particle case with slides used in the introduction and stress that particles are often manifested as bumps in histograms, and that those bumps need to be large enough to stand out from the background in a statistically significant fashion.

8.2 - Looking for Higgs: 2. Particle and Counting Introduction 2

We give a few details on one LHC experiment ATLAS. Experimental physics papers have a staggering number of authors and quite big budgets. Feynman diagrams describe processes in a fundamental fashion.

8.3 - Looking for Higgs: 3. Particle Experiments

We give a few details on one LHC experiment, ATLAS. Experimental physics papers have a staggering number of authors and quite big budgets. Feynman diagrams describe processes in a fundamental fashion.

8.4 - Looking for Higgs: 4. Accelerator Picture Gallery of Big Science

This lesson gives a small picture gallery of accelerators: accelerators, detection chambers, and magnets in tunnels, and a large underground laboratory used for experiments where you need to be shielded from background like cosmic rays.

Unit 9

This unit is devoted to Python experiments with Geoffrey looking at histograms of Higgs Boson production with various shapes of signal, various backgrounds, and various event totals.

9.1 - Looking for Higgs II: 1: Class Software

We discuss how this unit uses Java (deprecated) and Python on either a backend server (FutureGrid - closed!) or a local client. We point out a useful book on Python for data analysis. This lesson is deprecated; follow the current technology for the class.

9.2 - Looking for Higgs II: 2: Event Counting

We define “event counting” data collection environments. We discuss the Python and Java code to generate events according to a particular scenario (the important idea of Monte Carlo data). Here the scenario is a sloping background plus either a Higgs particle generated similarly to the LHC observation or one observed with better resolution (smaller measurement error).

9.3 - Looking for Higgs II: 3: With Python examples of Signal plus Background

This lesson uses Monte Carlo data both to generate data like the experimental observations and to explore the effect of changing the amount of data and the measurement resolution for the Higgs.
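The kind of Monte Carlo data described in these lessons can be sketched with the Python standard library alone. This is a minimal illustration, not the class software: the mass range (110 to 140), the peak position (126), the resolution `width`, and the event totals are all assumed values chosen for the example, and the function names are hypothetical.

```python
import random

def generate_events(n_background, n_signal, seed=0,
                    lo=110.0, hi=140.0, peak=126.0, width=2.0):
    """Return simulated masses: a sloping background plus a Gaussian bump."""
    rng = random.Random(seed)
    events = []
    # Sloping background: a triangular density that is highest at `lo`
    # and falls linearly to zero at `hi` (an assumed shape).
    for _ in range(n_background):
        events.append(rng.triangular(lo, hi, lo))
    # Signal: Gaussian centred on the assumed mass; `width` plays the
    # role of the measurement resolution.
    for _ in range(n_signal):
        events.append(rng.gauss(peak, width))
    return events

def histogram(values, lo, hi, n_bins):
    """Count values into equal-width bins on [lo, hi)."""
    counts = [0] * n_bins
    bin_width = (hi - lo) / n_bins
    for v in values:
        if lo <= v < hi:
            counts[int((v - lo) / bin_width)] += 1
    return counts

events = generate_events(n_background=20000, n_signal=300)
counts = histogram(events, 110.0, 140.0, 15)
for i, c in enumerate(counts):
    print(f"{110 + 2 * i}-{112 + 2 * i}: {c}")
```

Rerunning with a smaller `width` or a different `n_signal` shows how resolution and the amount of data change how clearly the bump stands out from the background.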

9.4 - Looking for Higgs II: 4: Change shape of background & number of Higgs Particles

This lesson continues the examination of Monte Carlo data, looking at the effect of changes in the number of Higgs particles produced and in the shape of the background.

Unit 10

Big Data Higgs Unit 10: Looking for Higgs Particles Part III: Random Variables, Physics and Normal Distributions

Overview: Geoffrey introduces random variables and some simple principles of statistics and explains why they are relevant to Physics counting experiments. The unit introduces Gaussian (normal) distributions and explains why they are seen so often in natural phenomena. Several Python illustrations are given. Java is currently not available in this unit.

10.1 - Statistics Overview and Fundamental Idea: Random Variables

We go through the many different areas of statistics covered in the Physics unit. We define the statistics concept of a random variable.

10.2 - Physics and Random Variables I

We describe the DIKW pipeline for the analysis of this type of physics experiment and go through the details of the analysis pipeline for the LHC ATLAS experiment. We give examples of event displays showing the final state particles seen in a few events. We illustrate how physicists decide what’s going on with a plot of expected Higgs production experimental cross sections (probabilities) for signal and background.

10.3 - Physics and Random Variables II

We describe the DIKW pipeline for the analysis of this type of physics experiment and go through the details of the analysis pipeline for the LHC ATLAS experiment. We give examples of event displays showing the final state particles seen in a few events. We illustrate how physicists decide what’s going on with a plot of expected Higgs production experimental cross sections (probabilities) for signal and background.

10.4 - Statistics of Events with Normal Distributions

We introduce Poisson and Binomial distributions and define independent identically distributed (IID) random variables. We give the law of large numbers defining the errors in counting and leading to Gaussian distributions for many things. We demonstrate this in Python experiments.
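A small experiment can make the counting-error idea concrete. The sketch below is an illustration under assumed numbers (2000 trials with success probability 0.05, repeated 500 times), not the lesson's own notebook. It repeats a counting experiment many times and compares the observed spread of the counts with the square root of the mean count.

```python
import random
import statistics

def count_successes(rng, n_trials, p):
    """One counting experiment: number of successes in n_trials IID trials."""
    return sum(1 for _ in range(n_trials) if rng.random() < p)

rng = random.Random(42)
# Repeat the same counting experiment 500 times (assumed sizes).
counts = [count_successes(rng, n_trials=2000, p=0.05) for _ in range(500)]

mean = statistics.mean(counts)
spread = statistics.pstdev(counts)
print(f"mean count      = {mean:.1f}")
print(f"observed spread = {spread:.2f}")
print(f"sqrt(mean)      = {mean ** 0.5:.2f}")
```

For rare successes the spread is close to the square root of the mean count, which is the usual rule of thumb for the statistical error on a count.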

10.5 - Gaussian Distributions

We introduce the Gaussian distribution and give Python examples of the fluctuations in counting Gaussian distributions.

10.6 - Using Statistics

We discuss the significance of a standard deviation and the role of biases and insufficient statistics, with a Python example, in getting incorrect answers.

Unit 11

Big Data Higgs Unit 11: Looking for Higgs Particles Part IV: Random Numbers, Distributions and Central Limit Theorem

Unit Overview: Geoffrey discusses Random Numbers with their Generators and Seeds and introduces the Binomial and Poisson Distributions. Monte-Carlo and accept-reject methods are discussed. The Central Limit Theorem and Bayes law conclude the discussion. Python and Java (for students - not reviewed in class) examples and Physics applications are given.

11.1 - Generators and Seeds I

We define random numbers and describe how to generate them on the computer, giving Python examples. We define the seed used to specify how to start generation.

11.2 - Generators and Seeds II

We define random numbers and describe how to generate them on the computer, giving Python examples. We define the seed used to specify how to start generation.
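The role of the seed can be seen in a few lines of standard-library Python. This is a minimal sketch; the helper name `sample` and the seed values are arbitrary choices for illustration.

```python
import random

def sample(seed, n=5):
    """Draw n pseudo-random numbers from a generator started at `seed`."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]

first = sample(seed=1234)
second = sample(seed=1234)   # same seed: the sequence is reproduced
other = sample(seed=4321)    # different seed: a different sequence

print(first == second)
print(first == other)
```

Fixing the seed makes a Monte Carlo study repeatable, which helps when debugging or comparing runs.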

11.3 - Binomial Distribution

We define the binomial distribution and give LHC data as an example of where this distribution is valid.

11.4 - Accept-Reject

We introduce an advanced method – accept/reject – for generating random variables with arbitrary distributions.
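A minimal sketch of the accept/reject idea, assuming a target density that is bounded on a finite interval. The function names and the example density f(x) = 2x on [0, 1] are illustrative choices, not taken from the lesson.

```python
import random

def accept_reject(pdf, lo, hi, pdf_max, n, seed=0):
    """Draw n samples from `pdf` on [lo, hi] by accept/reject.

    `pdf_max` must be an upper bound on pdf(x) over the interval.
    """
    rng = random.Random(seed)
    samples = []
    while len(samples) < n:
        x = rng.uniform(lo, hi)          # propose a point uniformly
        y = rng.uniform(0.0, pdf_max)    # uniform height under the bound
        if y < pdf(x):                   # keep x only if it falls under the curve
            samples.append(x)
    return samples

def target(x):
    """Example density f(x) = 2x on [0, 1]; its mean is 2/3."""
    return 2.0 * x

samples = accept_reject(target, 0.0, 1.0, pdf_max=2.0, n=10000)
print(f"sample mean = {sum(samples) / len(samples):.3f}")
```

The tighter the bound `pdf_max` is to the true maximum, the fewer proposals are rejected; for this density about half of the proposals are kept.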

11.5 - Monte Carlo Method

We define the Monte Carlo method, which typically uses the accept/reject method to sample from a distribution.

11.6 - Poisson Distribution

We extend the Binomial to the Poisson distribution and give a set of amusing examples from Wikipedia.
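The step from the Binomial to the Poisson distribution can be checked numerically. The sketch below holds the mean n·p fixed at an assumed value of 3 and lets n grow; the function names are illustrative, and `math.comb` requires Python 3.8 or later.

```python
import math

def binomial_pmf(k, n, p):
    """Probability of k successes in n trials with success probability p."""
    return math.comb(n, k) * p ** k * (1 - p) ** (n - k)

def poisson_pmf(k, lam):
    """Probability of k events for a Poisson distribution with mean lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)

lam = 3.0
diffs = []
for n in (10, 100, 10000):
    p = lam / n   # keep the mean n*p fixed while n grows
    max_diff = max(abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam))
                   for k in range(11))
    diffs.append(max_diff)
    print(f"n = {n:5d}  largest difference = {max_diff:.6f}")
```

The largest difference between the two distributions shrinks as n grows, which is the sense in which the Poisson distribution is the limit of the Binomial for many trials with a small success probability.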

11.7 - Central Limit Theorem

We introduce the Central Limit Theorem and give examples from Wikipedia.
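One way to see the Central Limit Theorem at work is to average uniform random numbers, which individually look nothing like a Gaussian. This sketch uses assumed sizes (30 numbers per average, 5000 averages) and checks two Gaussian properties: the width of the distribution of averages and the fraction within one standard deviation.

```python
import random
import statistics

rng = random.Random(7)
n_per_mean = 30

# Each entry is the average of 30 uniform numbers on [0, 1).
means = [statistics.fmean(rng.random() for _ in range(n_per_mean))
         for _ in range(5000)]

# A single uniform number has variance 1/12, so the average of n of them
# should have standard deviation sqrt(1 / (12 * n)).
expected_sigma = (1.0 / (12 * n_per_mean)) ** 0.5
observed_sigma = statistics.pstdev(means)
centre = statistics.fmean(means)
within = sum(1 for m in means if abs(m - centre) < observed_sigma) / len(means)

print(f"expected sigma = {expected_sigma:.4f}")
print(f"observed sigma = {observed_sigma:.4f}")
print(f"fraction within one sigma = {within:.3f}")
```

For a Gaussian, about 68% of values lie within one standard deviation of the mean, and the averages come close to that even though the underlying numbers are uniform.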

11.8 - Interpretation of Probability: Bayes v. Frequency

This lesson describes the difference between the Bayes and frequency views of probability. Bayes’s law of conditional probability is derived and applied to the Higgs example to enable information about the Higgs from multiple channels and multiple experiments to be accumulated.
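As a compact reminder of the standard derivation, Bayes’s law follows from writing a joint probability in two ways. The symbols are labels chosen here for illustration: H stands for a hypothesis and D, D_1, D_2 for observed data. The second line additionally assumes that the two data sets are independent given H, which is what allows evidence from separate channels or experiments to be multiplied together.

```latex
P(H \cap D) = P(H \mid D)\,P(D) = P(D \mid H)\,P(H)
\quad\Longrightarrow\quad
P(H \mid D) = \frac{P(D \mid H)\,P(H)}{P(D)}

P(H \mid D_1, D_2) \;\propto\; P(D_2 \mid H)\,P(D_1 \mid H)\,P(H)
```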

7 - Deep Learning

Introduction to Deep Learning {#sec:534-intro-to-dnn}

In this tutorial we work through the first lab on deep neural networks. Basic classification using deep learning is discussed in this chapter.

MNIST Classification Version 1

Using Cloudmesh Common

Here we do a simple benchmark. We calculate compile time, train time, test time, and data loading time for this example. Installing the cloudmesh-common library is the first step. Focus on this section, because Assignment 4 will be focused on the content of this lab.

!pip install cloudmesh-common

Successfully installed cloudmesh-common-4.2.13 colorama-0.4.1 oyaml-0.9 pathlib2-2.3.5 python-hostlist-1.18 simplejson-3.16.0

In this lesson we discuss how to create a simple IPython Notebook to solve an image classification problem. MNIST contains a set of pictures of handwritten digits.

! python3 --version

Python 3.6.8

! pip install tensorflow-gpu==1.14.0

Collecting tensorflow-gpu==1.14.0

Downloading https://files.pythonhosted.org/packages/76/04/43153bfdfcf6c9a4c38ecdb971ca9a75b9a791bb69a764d652c359aca504/tensorflow_gpu-1.14.0-cp36-cp36m-manylinux1_x86_64.whl (377.0MB)

|████████████████████████████████| 377.0MB 77kB/s

Requirement already satisfied: six>=1.10.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.12.0)

Requirement already satisfied: grpcio>=1.8.6 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.15.0)

Requirement already satisfied: protobuf>=3.6.1 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (3.7.1)

Requirement already satisfied: keras-applications>=1.0.6 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.0.8)

Requirement already satisfied: gast>=0.2.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (0.2.2)

Requirement already satisfied: astor>=0.6.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (0.8.0)

Requirement already satisfied: absl-py>=0.7.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (0.8.0)

Requirement already satisfied: wrapt>=1.11.1 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.11.2)

Requirement already satisfied: wheel>=0.26 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (0.33.6)

Requirement already satisfied: tensorflow-estimator 1.15.0rc0,>=1.14.0rc0 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.14.0)

Requirement already satisfied: tensorboard 1.15.0,>=1.14.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.14.0)

Requirement already satisfied: numpy 2.0,>=1.14.5 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.16.5)

Requirement already satisfied: termcolor>=1.1.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.1.0)

Requirement already satisfied: keras-preprocessing>=1.0.5 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (1.1.0)

Requirement already satisfied: google-pasta>=0.1.6 in /usr/local/lib/python3.6/dist-packages (from tensorflow-gpu==1.14.0) (0.1.7)

Requirement already satisfied: setuptools in /usr/local/lib/python3.6/dist-packages (from protobuf>=3.6.1->tensorflow-gpu==1.14.0) (41.2.0)

Requirement already satisfied: h5py in /usr/local/lib/python3.6/dist-packages (from keras-applications>=1.0.6->tensorflow-gpu==1.14.0) (2.8.0)

Requirement already satisfied: markdown>=2.6.8 in /usr/local/lib/python3.6/dist-packages (from tensorboard<1.15.0,>=1.14.0->tensorflow-gpu==1.14.0) (3.1.1)

Requirement already satisfied: werkzeug>=0.11.15 in /usr/local/lib/python3.6/dist-packages (from tensorboard<1.15.0,>=1.14.0->tensorflow-gpu==1.14.0) (0.15.6)

Installing collected packages: tensorflow-gpu

Successfully installed tensorflow-gpu-1.14.0

Import Libraries

Note: https://python-future.org/quickstart.html

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

import time

import numpy as np

from keras.models import Sequential

from keras.layers import Dense, Activation, Dropout

from keras.utils import to_categorical, plot_model

from keras.datasets import mnist

from cloudmesh.common.StopWatch import StopWatch

Using TensorFlow backend.

Pre-process data

Load data

First, we load the data from the MNIST dataset that is built into Keras.

StopWatch.start("data-load")

(x_train, y_train), (x_test, y_test) = mnist.load_data()

StopWatch.stop("data-load")

Downloading data from https://s3.amazonaws.com/img-datasets/mnist.npz

11493376/11490434 [==============================] - 1s 0us/step

Identify Number of Classes

As this is a number classification problem, we need to know how many classes there are. So we’ll count the number of unique labels.

num_labels = len(np.unique(y_train))

Convert Labels To One-Hot Vector

Exercise MNIST_V1.0.0: Find out what a one-hot vector is.

y_train = to_categorical(y_train)

y_test = to_categorical(y_test)
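As a rough illustration of what the conversion above produces, the following sketch builds one-hot rows with plain NumPy. The helper name `one_hot` and the sample labels are assumptions made for this example; the notebook itself relies on `to_categorical` from Keras.

```python
import numpy as np


def one_hot(labels, num_classes):
    """Return an array of shape (len(labels), num_classes) with a single 1 per row.

    Hypothetical helper for illustration only; not part of Keras.
    """
    labels = np.asarray(labels, dtype=int)
    encoded = np.zeros((labels.size, num_classes), dtype="float32")
    # Set the column that matches each label to 1.
    encoded[np.arange(labels.size), labels] = 1.0
    return encoded


# Made-up labels standing in for the first entries of y_train.
sample_labels = [5, 0, 4]
sample_encoded = one_hot(sample_labels, num_classes=10)
print(sample_encoded)
```

Each row contains exactly one non-zero entry, located at the index of the original label.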

Image Reshaping

The model is designed to treat each image as a vector, so the data must be reshaped. This is a model-dependent modification. Here we assume the image is square.

image_size = x_train.shape[1]

input_size = image_size * image_size

Resize and Normalize

The next step is to reshape each image so that it fits into a vector, and to normalize the data. Image values range from 0 to 255, so an easy way to normalize is to divide by the maximum value.

Exercise MNIST_V1.0.1: Suggest another way to normalize the data that preserves or improves the accuracy.

x_train = np.reshape(x_train, [-1, input_size])

x_train = x_train.astype('float32') / 255

x_test = np.reshape(x_test, [-1, input_size])

x_test = x_test.astype('float32') / 255
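To check the effect of the reshaping and scaling without downloading MNIST, the same two steps can be tried on a small random array. This is only a sketch: `fake_images` is a made-up stand-in for `x_train`, not real data.

```python
import numpy as np

# Hypothetical stand-in for x_train: 3 grayscale images of 28 x 28 pixels.
rng = np.random.default_rng(0)
fake_images = rng.integers(0, 256, size=(3, 28, 28)).astype(np.uint8)

image_size = fake_images.shape[1]
input_size = image_size * image_size

# Flatten every image into one row, then scale the pixel values into [0, 1].
flat = np.reshape(fake_images, [-1, input_size])
scaled = flat.astype("float32") / 255

print(flat.shape, scaled.dtype, scaled.min(), scaled.max())
```

The flattened array has one row per image and 784 columns, and every scaled value lies between 0 and 1.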

Create a Keras Model

Keras is a neural network library. The most important thing in Keras is the way we design the neural network.

In this model there are a couple of ideas to understand.

Exercise MNIST_V1.1.0: Find out what a dense layer is.

A simple model can be created by using a Sequential instance in Keras. To this instance we add the following layers:

- Dense Layer (hidden layer)

- Dense Layer (one unit per class)

- Activation Layer (Softmax is the activation function)

A dense layer is fully connected to the layer that follows it. Here the first dense layer has 64 hidden units; it is followed by a second dense layer with one unit per class and then by an activation layer.

Exercise MNIST_V1.2.0: Find out what an activation function is used for. Find out why softmax was used as the last layer.

batch_size = 4

hidden_units = 64

model = Sequential()

model.add(Dense(hidden_units, input_dim=input_size))

model.add(Dense(num_labels))

model.add(Activation('softmax'))

model.summary()

plot_model(model, to_file='mnist_v1.png', show_shapes=True)

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/keras/backend/tensorflow_backend.py:66: The name tf.get_default_graph is deprecated. Please use tf.compat.v1.get_default_graph instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/keras/backend/tensorflow_backend.py:541: The name tf.placeholder is deprecated. Please use tf.compat.v1.placeholder instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/keras/backend/tensorflow_backend.py:4432: The name tf.random_uniform is deprecated. Please use tf.random.uniform instead.

Model: "sequential_1"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

dense_1 (Dense) (None, 64) 50240

_________________________________________________________________

dense_2 (Dense) (None, 10) 650

_________________________________________________________________

activation_1 (Activation) (None, 10) 0

=================================================================

Total params: 50,890

Trainable params: 50,890

Non-trainable params: 0

_________________________________________________________________
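The parameter counts in the summary above can be reproduced by hand: a dense layer has one weight per input-unit pair plus one bias per unit. The short sketch below redoes that arithmetic; the helper name `dense_params` is an assumption introduced for this example.

```python
def dense_params(inputs, units):
    """Weights (inputs * units) plus one bias per unit."""
    return inputs * units + units


input_size = 28 * 28   # flattened MNIST image
hidden_units = 64
num_labels = 10

first_layer = dense_params(input_size, hidden_units)
second_layer = dense_params(hidden_units, num_labels)
total = first_layer + second_layer

print(first_layer, second_layer, total)
```

The three values agree with the `Param #` column and the `Total params` line of the summary. The activation layer adds no parameters.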

Compile and Train

A Keras model needs to be compiled before it can be trained. In the compile function, you provide the optimizer you want to use, the metrics you expect and the type of loss function you need.

Here we use the adam optimizer, a widely used optimizer for neural networks.

Exercise MNIST_V1.3.0: Find 3 other optimizers used on neural networks.

The loss function we have used is categorical_crossentropy.

Exercise MNIST_V1.4.0: Find other loss functions provided in Keras. Your answer can be limited to one or more.

Once the model is compiled, the fit function is called with the training data, the number of epochs and the batch size.

The batch size determines the number of elements used per minibatch in optimizing the function.

Note: Change the number of epochs and the batch size and see what happens.

Exercise MNIST_V1.5.0: Figure out a way to plot the loss function value. You can use any method you like.

StopWatch.start("compile")

model.compile(loss='categorical_crossentropy',
              optimizer='adam',
              metrics=['accuracy'])

StopWatch.stop("compile")

StopWatch.start("train")

model.fit(x_train, y_train, epochs=1, batch_size=batch_size)

StopWatch.stop("train")

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/keras/optimizers.py:793: The name tf.train.Optimizer is deprecated. Please use tf.compat.v1.train.Optimizer instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/keras/backend/tensorflow_backend.py:3576: The name tf.log is deprecated. Please use tf.math.log instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/math_grad.py:1250: add_dispatch_support.<locals>.wrapper (from tensorflow.python.ops.array_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Use tf.where in 2.0, which has the same broadcast rule as np.where

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/keras/backend/tensorflow_backend.py:1033: The name tf.assign_add is deprecated. Please use tf.compat.v1.assign_add instead.

Epoch 1/1

60000/60000 [==============================] - 20s 336us/step - loss: 0.3717 - acc: 0.8934
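To make the loss value reported during training less abstract, the sketch below computes softmax probabilities and the categorical cross-entropy with NumPy for two made-up samples. It also counts the minibatches in one epoch for the batch size used in this run. The function names and sample values are assumptions for illustration; this is not how Keras implements the loss internally.

```python
import numpy as np


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1 per row."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)  # numerical stability
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


def categorical_crossentropy(y_true, y_prob, eps=1e-7):
    """Mean of -sum(y_true * log(y_prob)) over all samples."""
    y_prob = np.clip(y_prob, eps, 1.0 - eps)
    return float(np.mean(-np.sum(y_true * np.log(y_prob), axis=-1)))


# Made-up scores and one-hot targets for two samples and three classes.
logits = np.array([[2.0, 1.0, 0.1], [0.5, 2.5, 0.0]])
targets = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])

probs = softmax(logits)
loss = categorical_crossentropy(targets, probs)

# 60000 training samples with batch_size = 4, as in the run above.
steps_per_epoch = int(np.ceil(60000 / 4))

print(probs, loss, steps_per_epoch)
```

A smaller batch size means more weight updates per epoch, which is one reason why changing it affects both run time and accuracy.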

Testing

Now we can test the trained model. Call the evaluate function with the test data and the batch size; it returns the loss value and the accuracy.

Exercise MNIST_V1.6.0: Try to optimize the network by changing the number of epochs and the batch size, and record the best accuracy that you can achieve.

StopWatch.start("test")

loss, acc = model.evaluate(x_test, y_test, batch_size=batch_size)

print("\nTest accuracy: %.1f%%" % (100.0 * acc))

StopWatch.stop("test")

10000/10000 [==============================] - 1s 138us/step

Test accuracy: 91.0%
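The accuracy metric can be understood as the share of samples whose highest-probability class matches the true label. The following sketch computes it with NumPy on four made-up predictions; the function name `accuracy` and the data are assumptions for this example.

```python
import numpy as np


def accuracy(y_true_one_hot, y_prob):
    """Fraction of rows where the predicted class equals the true class."""
    predicted = np.argmax(y_prob, axis=1)
    actual = np.argmax(y_true_one_hot, axis=1)
    return float(np.mean(predicted == actual))


# Made-up one-hot labels and predicted probabilities for four samples.
y_true = np.array([[1, 0, 0], [0, 1, 0], [0, 0, 1], [0, 1, 0]])
y_prob = np.array([
    [0.7, 0.2, 0.1],
    [0.1, 0.8, 0.1],
    [0.3, 0.4, 0.3],  # wrong: predicts class 1, true class is 2
    [0.2, 0.5, 0.3],
])

acc_value = accuracy(y_true, y_prob)
print("Accuracy: %.1f%%" % (100.0 * acc_value))
```

Three of the four predictions are correct, so the sketch reports 75.0%.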

StopWatch.benchmark()

+---------------------+------------------------------------------------------------------+

| Machine Attribute | Value |

+---------------------+------------------------------------------------------------------+

| BUG_REPORT_URL | "https://bugs.launchpad.net/ubuntu/" |

| DISTRIB_CODENAME | bionic |

| DISTRIB_DESCRIPTION | "Ubuntu 18.04.3 LTS" |

| DISTRIB_ID | Ubuntu |

| DISTRIB_RELEASE | 18.04 |

| HOME_URL | "https://www.ubuntu.com/" |

| ID | ubuntu |

| ID_LIKE | debian |

| NAME | "Ubuntu" |

| PRETTY_NAME | "Ubuntu 18.04.3 LTS" |

| PRIVACY_POLICY_URL | "https://www.ubuntu.com/legal/terms-and-policies/privacy-policy" |

| SUPPORT_URL | "https://help.ubuntu.com/" |

| UBUNTU_CODENAME | bionic |

| VERSION | "18.04.3 LTS (Bionic Beaver)" |

| VERSION_CODENAME | bionic |

| VERSION_ID | "18.04" |

| cpu_count | 2 |

| mac_version | |

| machine | ('x86_64',) |

| mem_active | 973.8 MiB |

| mem_available | 11.7 GiB |

| mem_free | 5.1 GiB |

| mem_inactive | 6.3 GiB |

| mem_percent | 8.3% |

| mem_total | 12.7 GiB |

| mem_used | 877.3 MiB |

| node | ('8281485b0a16',) |

| platform | Linux-4.14.137+-x86_64-with-Ubuntu-18.04-bionic |

| processor | ('x86_64',) |

| processors | Linux |

| python | 3.6.8 (default, Jan 14 2019, 11:02:34) |

| | [GCC 8.0.1 20180414 (experimental) [trunk revision 259383]] |

| release | ('4.14.137+',) |

| sys | linux |

| system | Linux |

| user | |

| version | #1 SMP Thu Aug 8 02:47:02 PDT 2019 |

| win_version | |

+---------------------+------------------------------------------------------------------+

+-----------+-------+---------------------+-----+-------------------+------+--------+-------------+-------------+

| timer | time | start | tag | node | user | system | mac_version | win_version |

+-----------+-------+---------------------+-----+-------------------+------+--------+-------------+-------------+

| data-load | 1.335 | 2019-09-27 13:37:41 | | ('8281485b0a16',) | | Linux | | |

| compile | 0.047 | 2019-09-27 13:37:43 | | ('8281485b0a16',) | | Linux | | |

| train | 20.58 | 2019-09-27 13:37:43 | | ('8281485b0a16',) | | Linux | | |

| test | 1.393 | 2019-09-27 13:38:03 | | ('8281485b0a16',) | | Linux | | |

+-----------+-------+---------------------+-----+-------------------+------+--------+-------------+-------------+

timer,time,starttag,node,user,system,mac_version,win_version

data-load,1.335,None,('8281485b0a16',),,Linux,,

compile,0.047,None,('8281485b0a16',),,Linux,,

train,20.58,None,('8281485b0a16',),,Linux,,

test,1.393,None,('8281485b0a16',),,Linux,,

Final Note

This programme can be seen as the hello world programme of deep learning. The objective of this exercise is not to teach you the depths of deep learning, but to teach you the basic concepts that you may need to design a simple network to solve a problem. Before running the whole code, read all the instructions that precede each code section. Solve all the problems marked with the Exercise keyword (Exercise MNIST_V1.0.0 - MNIST_V1.6.0). Write your answers and submit a PDF by following Assignment 5. Include the code or observations you made on those sections.

8 - Sports

Sports with Big Data Applications {#sec:534-week7}

E534 2019 Big Data Applications and Analytics Sports Informatics Part I (Unit 32) Section Summary (Parts I, II, III): Sports sees significant growth in analytics, with pervasive statistics shifting to more sophisticated measures. We start with baseball, as the game is built around segments dominated by individuals, where detailed (video/image) achievement measures including PITCHf/x and FIELDf/x are moving the field into the big data arena. There are interesting relationships between the economics of sports and big data analytics. We look at Wearables and consumer sports/recreation. The importance of spatial visualization is discussed. We look at other Sports: Soccer, Olympics, NFL Football, Basketball, Tennis and Horse Racing.

Unit 32

Unit Summary (Part I, Unit 32): This unit discusses baseball, starting with the movie Moneyball and the 2002-2003 Oakland Athletics. Unlike sports such as basketball and soccer, most baseball action is built around individuals, often interacting in pairs. This is much easier to quantify than the many-player phenomena in other sports. We discuss the Performance-Dollar relationship, including new stadiums and media/advertising. We look at classic baseball averages and sophisticated measures like Wins Above Replacement.

Lesson Summaries

BDAA 32.1 - E534 Sports - Introduction and Sabermetrics (Baseball Informatics) Lesson

Introduction to all Sports Informatics, Moneyball The 2002-2003 Oakland Athletics, Diamond Dollars economic model of baseball, Performance - Dollar relationship, Value of a Win.

BDAA 32.2 - E534 Sports - Basic Sabermetrics

Different Types of Baseball Data, Sabermetrics, Overview of all data, Details of some statistics based on basic data, OPS, wOBA, ERA, ERC, FIP, UZR.
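Two of the statistics named in this lesson have simple standard definitions that can be sketched in a few lines: OPS is on-base percentage plus slugging percentage, and ERA is earned runs per nine innings. The season totals below are made up for illustration and do not describe any real player.

```python
def on_base_percentage(hits, walks, hit_by_pitch, at_bats, sacrifice_flies):
    """OBP = (H + BB + HBP) / (AB + BB + HBP + SF)."""
    return (hits + walks + hit_by_pitch) / (
        at_bats + walks + hit_by_pitch + sacrifice_flies
    )


def slugging_percentage(singles, doubles, triples, home_runs, at_bats):
    """SLG = total bases / at bats."""
    total_bases = singles + 2 * doubles + 3 * triples + 4 * home_runs
    return total_bases / at_bats


def earned_run_average(earned_runs, innings_pitched):
    """ERA = earned runs allowed per nine innings pitched."""
    return 9 * earned_runs / innings_pitched


# Made-up batting line: 150 hits = 100 singles + 30 doubles + 5 triples + 15 home runs.
obp = on_base_percentage(hits=150, walks=50, hit_by_pitch=5,
                         at_bats=500, sacrifice_flies=5)
slg = slugging_percentage(singles=100, doubles=30, triples=5,
                          home_runs=15, at_bats=500)
ops = obp + slg

# Made-up pitching line: 70 earned runs in 200 innings.
era = earned_run_average(earned_runs=70, innings_pitched=200)

print(round(obp, 3), round(slg, 3), round(ops, 3), round(era, 2))
```

Measures such as wOBA, FIP and UZR need league-specific weights or fielding data and are not sketched here.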

BDAA 32.3 - E534 Sports - Wins Above Replacement

Wins Above Replacement (WAR), Discussion of Calculation, Examples, Comparisons of different methods, Coefficient of Determination, Another Sabermetrics Example, Summary of Sabermetrics.
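The coefficient of determination mentioned in this lesson measures how much of the variation in one set of values is explained by another. The sketch below shows the usual formula, R^2 = 1 - SS_res / SS_tot, on made-up numbers. How the lesson applies it to WAR comparisons is not reproduced here.

```python
import numpy as np


def coefficient_of_determination(observed, predicted):
    """R^2 = 1 - (residual sum of squares) / (total sum of squares)."""
    observed = np.asarray(observed, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    ss_res = np.sum((observed - predicted) ** 2)
    ss_tot = np.sum((observed - observed.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


# Made-up values, e.g. one rating treated as "observed" and another as "predicted".
observed = [2.0, 4.0, 6.0, 8.0]
predicted = [2.5, 3.5, 6.5, 7.5]

r_squared = coefficient_of_determination(observed, predicted)
print(r_squared)
```

A value close to 1 means the two series move together closely; a value near 0 means the prediction explains little of the variation.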

Unit 33

E534 2019 Big Data Applications and Analytics Sports Informatics Part II (Unit 33)

Unit Summary (Part II, Unit 33): This unit discusses ‘advanced sabermetrics’ covering advances possible from using video from PITCHf/X, FIELDf/X, HITf/X, COMMANDf/X and MLBAM.

BDAA 33.1 - E534 Sports - Pitching Clustering

A Big Data Pitcher Clustering method introduced by Vince Gennaro, Data from Blog and video at 2013 SABR conference

BDAA 33.2 - E534 Sports - Pitcher Quality

Results of optimizing match ups, Data from video at 2013 SABR conference.

BDAA 33.3 - E534 Sports - PITCHf/X

Examples of use of PITCHf/X.

BDAA 33.4 - E534 Sports - Other Video Data Gathering in Baseball

FIELDf/X, MLBAM, HITf/X, COMMANDf/X.

Unit 34

E534 2019 Big Data Applications and Analytics Sports Informatics Part III (Unit 34)

Unit Summary (Part III, Unit 34): We look at Wearables and consumer sports/recreation. The importance of spatial visualization is discussed. We look at other Sports: Soccer, Olympics, NFL Football, Basketball, Tennis and Horse Racing.

Lesson Summaries

BDAA 34.1 - E534 Sports - Wearables

Consumer Sports, Stake Holders, and Multiple Factors.

BDAA 34.2 - E534 Sports - Soccer and the Olympics

Soccer, Tracking Players and Balls, Olympics.

BDAA 34.3 - E534 Sports - Spatial Visualization in NFL and NBA

NFL, NBA, and Spatial Visualization.

BDAA 34.4 - E534 Sports - Tennis and Horse Racing

Tennis, Horse Racing, and Continued Emphasis on Spatial Visualization.

9 - Deep Learning (Cont. I)

Introduction to Deep Learning Part I

E534 2019 BDAA DL Section Intro Unit: E534 2019 Big Data Applications and Analytics Introduction to Deep Learning Part I (Unit Intro) Section Summary

This section covers the growing importance of the use of Deep Learning in Big Data Applications and Analytics. The Intro Unit is an introduction to the technology with examples incidental. It includes an introduction to the laboratory where we use Keras and Tensorflow. The Tech unit covers the deep learning technology in more detail. The Application Units cover deep learning applications at different levels of sophistication.

Intro Unit Summary

This unit is an introduction to deep learning with four major lessons.

Lesson Summaries

Optimization

Optimization: Overview of Optimization

The Opt lesson gives an overview of optimization with a focus on issues of importance for deep learning. It gives a quick review of Objective Function, Local Minima (Optima), Annealing, Everything is an optimization problem with examples, Examples of Objective Functions, Greedy Algorithms, Distances in funny spaces, Discrete or Continuous Parameters, Genetic Algorithms, Heuristics.
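The idea of an objective function with local minima can be illustrated with a small gradient descent sketch. The function below is chosen only for illustration and is not taken from the lesson: it has a global minimum near x = -1.30 and a shallower local minimum near x = 1.13, and plain gradient descent ends up in whichever one is downhill from its starting point.

```python
def objective(x):
    """Illustrative objective with two minima (an assumption, not from the lesson)."""
    return x**4 - 3 * x**2 + x


def gradient(x):
    """Derivative of the objective."""
    return 4 * x**3 - 6 * x + 1


def gradient_descent(start, learning_rate=0.01, steps=2000):
    """Follow the negative gradient from the given starting point."""
    x = start
    for _ in range(steps):
        x -= learning_rate * gradient(x)
    return x


left_minimum = gradient_descent(-2.0)   # reaches the global minimum
right_minimum = gradient_descent(2.0)   # gets stuck in the local minimum

print(left_minimum, objective(left_minimum))
print(right_minimum, objective(right_minimum))
```

Techniques such as annealing or genetic algorithms add randomness so that a search has a chance to escape a local minimum like the one on the right.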

First Deep Learning Example

FirstDL: Your First Deep Learning Example

The FirstDL lesson gives an experience of running a non-trivial deep learning application. It goes through the identification of numbers from the MNIST database using a Multilayer Perceptron with Keras+Tensorflow running on Google Colab.

Deep Learning Basics

DLBasic: Basic Terms Used in Deep Learning

The DLBasic lesson reviews important Deep Learning topics including Activation (ReLU, Sigmoid, Tanh, Softmax), Loss Function, Optimizer, Stochastic Gradient Descent, Back Propagation, One-hot Vector, Vanishing Gradient, Hyperparameter.
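Several of the terms listed here can be made concrete with a few lines of NumPy. The sketch below evaluates ReLU, sigmoid and tanh on sample inputs and then hints at the vanishing gradient problem: the derivative of the sigmoid is at most 0.25, so a product of ten such factors, as in back propagation through ten sigmoid layers, is already tiny. The sample values are assumptions for illustration.

```python
import numpy as np


def relu(x):
    """max(0, x), applied element-wise."""
    return np.maximum(0.0, x)


def sigmoid(x):
    """1 / (1 + exp(-x)), squashes values into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))


def sigmoid_grad(x):
    """Derivative of the sigmoid: s * (1 - s)."""
    s = sigmoid(x)
    return s * (1.0 - s)


x = np.array([-2.0, 0.0, 2.0])
relu_out = relu(x)
sigmoid_out = sigmoid(x)
tanh_out = np.tanh(x)

# The sigmoid derivative peaks at x = 0 with value 0.25.
max_sigmoid_grad = float(sigmoid_grad(0.0))
# Upper bound on the product of ten sigmoid derivatives.
ten_layer_bound = max_sigmoid_grad ** 10

print(relu_out, sigmoid_out, tanh_out)
print(max_sigmoid_grad, ten_layer_bound)
```

ReLU avoids this shrinkage for positive inputs because its derivative there is 1.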

Deep Learning Types

DLTypes: Types of Deep Learning: Summaries

The DLTypes lesson reviews important Deep Learning neural network architectures including Multilayer Perceptron, CNN Convolutional Neural Network, Dropout for regularization, Max Pooling, RNN Recurrent Neural Networks, LSTM: Long Short Term Memory, GRU Gated Recurrent Unit, (Variational) Autoencoders, Transformer and Sequence to Sequence methods, GAN Generative Adversarial Network, (D)RL (Deep) Reinforcement Learning.

10 - Deep Learning (Cont. II)

Introduction to Deep Learning Part II: Applications

This section covers the growing importance of the use of Deep Learning in Big Data Applications and Analytics. The Intro Unit is an introduction to the technology with examples incidental. The MNIST Unit covers an example on Google Colaboratory. The Technology Unit covers deep learning approaches in more detail than the Intro Unit. The Application Units cover deep learning applications at different levels of sophistication.

Applications of Deep Learning Unit Summary

This unit is an introduction to deep learning applications and currently has 7 lessons.

Recommender: Overview of Recommender Systems

Recommender engines used to be dominated by collaborative filtering using matrix factorization and k-nearest neighbor approaches. Large systems like YouTube and Netflix now use deep learning. We look at systems like Spotify that use multiple sources of information.
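As a minimal sketch of the classic nearest-neighbor flavor of collaborative filtering mentioned above, the code below finds the most similar user by cosine similarity and borrows that user's rating for an unrated item. The rating matrix and helper names are made up for illustration; production recommenders, and the deep learning systems discussed in the lesson, are far more elaborate.

```python
import numpy as np

# Made-up ratings: rows are users, columns are items, 0 means "not rated".
ratings = np.array([
    [5, 4, 0, 1],
    [4, 5, 1, 0],
    [1, 0, 5, 4],
    [0, 1, 4, 5],
], dtype=float)


def cosine_similarity(a, b):
    """Cosine of the angle between two rating vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def nearest_neighbor(user, ratings):
    """Index of the other user whose ratings are most similar."""
    similarities = [
        cosine_similarity(ratings[user], ratings[other]) if other != user else -1.0
        for other in range(len(ratings))
    ]
    return int(np.argmax(similarities))


def predict_rating(user, item, ratings):
    """Use the nearest neighbor's rating as the prediction (1-nearest neighbor)."""
    return float(ratings[nearest_neighbor(user, ratings), item])


neighbor_of_0 = nearest_neighbor(0, ratings)
neighbor_of_2 = nearest_neighbor(2, ratings)
predicted = predict_rating(user=0, item=2, ratings=ratings)

print(neighbor_of_0, neighbor_of_2, predicted)
```

Using more than one neighbor and weighting their ratings by similarity is the usual next step.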

Retail: Overview of AI in Retail Sector (e-commerce)

The retail sector can use AI in Personalization, Search and Chatbots. Retailers must adopt AI to survive. We also discuss how to be a seller on Amazon.

RideHailing: Overview of AI in Ride Hailing Industry (Uber, Lyft, Didi)

The Ride Hailing industry will grow as it becomes the main mobility method for many customers. Its technology investment includes deep learning for matching drivers and passengers. There is a huge overlap with the larger area of AI in transportation.

SelfDriving: Overview of AI in Self (AI-Assisted) Driving cars

The automobile industry needs to remake itself as mobility companies. The basic automotive industry is flat to down, but AI can improve productivity. The lesson also discusses electric vehicles and drones.

Imaging: Overview of Scene Understanding

Imaging is an area where convolutional neural nets and deep learning have made amazing progress. All aspects of imaging are now dominated by deep learning. We discuss the impact of ImageNet in detail.

MainlyMedicine: Overview of AI in Health and Telecommunication

The telecommunication industry has little traditional growth to look forward to. It can use AI in its operations and exploit the trove of Big Data it possesses. Medicine has many breakthrough opportunities, but progress is hard, partly due to data privacy restrictions. Traditional Bioinformatics areas progress, but slowly; pathology is based on imagery and is making much better progress with deep learning.

BankingFinance: Overview of Banking and Finance

The FinTech sector has huge investments (larger than the other applications we studied), and we can expect all aspects of Banking and Finance to be remade with online digital Banking as a Service. It is doubtful that traditional banks will thrive.

11 - Introduction to Deep Learning (III)

Using deep learning algorithms is one of the most in-demand skills of this decade and the coming one. Providing hands-on experience with deep learning applications is one of the main goals of this lecture series. Let’s get started.

Deep Learning Algorithms Part 1

In this part of the lecture series, the idea is to provide an understanding of the usage of various deep learning algorithms. In this lesson we discuss the multi-layer perceptron (MLP) and convolutional neural networks (CNN). We take the MNIST classification problem and solve it using an MLP and a CNN.

Deep Learning Algorithms Part 2

In this lesson, we continue our study of deep learning algorithms. We use Recurrent Neural Network (RNN) examples to showcase how an RNN can be applied to MNIST classification.

Deep Learning Algorithms Part 3

CNN is one of the most prominent algorithms used in the deep learning world in the last decade. Many applications have been built using CNNs. Most of these applications deal with images, videos, etc. In this lesson we continue with convolutional neural networks and discuss a brief history of CNN.

Deep Learning Algorithms Part 4

In this lesson we continue our study of CNN by looking at how historical findings supported the rise of Convolutional Neural Networks. We also discuss why CNN has been used for various applications in various fields.

Deep Learning Algorithms Part 5

In this lesson we discuss auto-encoders. They are among the most widely used deep learning models for signal denoising and image denoising. Here we show how an auto-encoder can be used for such tasks.

Deep Learning Algorithms Part 6

In this lesson we discuss one of the most famous deep neural network architectures, Generative Adversarial Networks (GAN). This deep learning model has the capability of generating new outputs from existing knowledge. A GAN model is like a counterfeiter who keeps improving in order to produce the best counterfeits.

Additional Material

We have included more information on different types of deep neural networks and their usage. A summary of all the topics discussed under deep learning can be found in the following slide deck. Please refer to it for more information. Some of this information can help with writing term papers and projects.

12 - Cloud Computing

E534 Cloud Computing Unit

:orange_book: Full Slide Deck https://drive.google.com/open?id=1e61jrgTSeG8wQvQ2v6Zsp5AA31KCZPEQ

This page https://docs.google.com/document/d/1D8bEzKe9eyQfbKbpqdzgkKnFMCBT1lWildAVdoH5hYY/edit?usp=sharing

Overall Summary

Video: https://drive.google.com/open?id=1Iq-sKUP28AiTeDU3cW_7L1fEQ2hqakae

:orange_book: Slides https://drive.google.com/open?id=1MLYwAM6MrrZSKQjKm570mNtyNHiWSCjC

Defining Clouds I:

Video https://drive.google.com/open?id=15TbpDGR2VOy5AAYb_o4740enMZKiVTSz

:orange_book: Slides https://drive.google.com/open?id=1CMqgcpNwNiMqP8TZooqBMhwFhu2EAa3C

- Basic definition of cloud and two very simple examples of why virtualization is important.

- How clouds are situated wrt HPC and supercomputers

- Why multicore chips are important

- Typical data center

Defining Clouds II:

Video https://drive.google.com/open?id=1BvJCqBQHLMhrPrUsYvGWoq1nk7iGD9cd

:orange_book: Slides https://drive.google.com/open?id=1_rczdp74g8hFnAvXQPVfZClpvoB_B3RN

- Service-oriented architectures: Software services as Message-linked computing capabilities

- The different aaS’s: Network, Infrastructure, Platform, Software

- The amazing services that Amazon AWS and Microsoft Azure have

- Initial Gartner comments on clouds (they are now the norm) and evolution of servers; serverless and microservices

Defining Clouds III:

Video https://drive.google.com/open?id=1MjIU3N2PX_3SsYSN7eJtAlHGfdePbKEL

:orange_book: Slides https://drive.google.com/open?id=1cDJhE86YRAOCPCAz4dVv2ieq-4SwTYQW

- Cloud Market Share

- How important are they?

- How much money do they make?

Virtualization:

Video https://drive.google.com/open?id=1-zd6wf3zFCaTQFInosPHuHvcVrLOywsw

:orange_book: Slides https://drive.google.com/open?id=1_-BIAVHSgOnWQmMfIIC61wH-UBYywluO

- Virtualization Technologies, Hypervisors and the different approaches

- KVM, Xen, Docker and OpenStack

Cloud Infrastructure I:

Video https://drive.google.com/open?id=1CIVNiqu88yeRkeU5YOW3qNJbfQHwfBzE

:orange_book: Slides https://drive.google.com/open?id=11JRZe2RblX2MnJEAyNwc3zup6WS8lU-V

- Comments on trends in the data center and its technologies

- Clouds physically across the world

- Green computing

- Amount of world’s computing ecosystem in clouds

Cloud Infrastructure II:

Videos https://drive.google.com/open?id=1yGR0YaqSoZ83m1_Kz7q7esFrrxcFzVgl

:orange_book: Slides https://drive.google.com/open?id=1L6fnuALdW3ZTGFvu4nXsirPAn37ZMBEb

- Gartner hypecycle and priority matrix on Infrastructure Strategies and Compute Infrastructure

- Containers compared to virtual machines

- The emergence of artificial intelligence as a dominant force

Cloud Software:

Video https://drive.google.com/open?id=14HISqj17Ihom8G6v9KYR2GgAyjeK1mOp

:orange_book: Slides https://drive.google.com/open?id=10TaEQE9uEPBFtAHpCAT_1akCYbvlMCPg

- HPC-ABDS with over 350 software packages and how to use each of 21 layers

- Google’s software innovations

- MapReduce in pictures

- Cloud and HPC software stacks compared

- Components needed to support cloud/distributed system programming
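The MapReduce pattern listed above can be sketched in a single process with the classic word count example. This is a simplified illustration under the assumption of three tiny made-up documents; a real framework such as Hadoop distributes the map, shuffle and reduce phases across many machines.

```python
from collections import defaultdict


def map_phase(document):
    """Emit a (word, 1) pair for every word in the document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle_phase(pairs):
    """Group all emitted values by their key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Sum the values of each key to obtain the final counts."""
    return {key: sum(values) for key, values in grouped.items()}


# Made-up input documents.
documents = ["cloud data cloud", "data lake", "cloud native"]

mapped = [pair for doc in documents for pair in map_phase(doc)]
counts = reduce_phase(shuffle_phase(mapped))
print(counts)
```

Because each document is mapped independently and each key is reduced independently, both phases can run in parallel.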

Cloud Applications I: Research applications

Video https://drive.google.com/open?id=11zuqeUbaxyfpONOmHRaJQinc4YSZszri

:orange_book: Slides https://drive.google.com/open?id=1hUgC82FLutp32rICEbPJMgHaadTlOOJv

- Clouds in science, where the area is called cyberinfrastructure

Cloud Applications II: Few key types

Video https://drive.google.com/open?id=1S2-MgshCSqi9a6_tqEVktktN4Nf6Hj4d

:orange_book: Slides https://drive.google.com/open?id=1KlYnTZgRzqjnG1g-Mf8NTvw1k8DYUCbw

- Internet of Things

- Different types of MapReduce

Parallel Computing in Pictures

Video https://drive.google.com/open?id=1LSnVj0Vw2LXOAF4_CMvehkn0qMIr4y4J

:orange_book: Slides https://drive.google.com/open?id=1IDozpqtGbTEzANDRt4JNb1Fhp7JCooZH

- Some useful analogies and principles

- Society and Building Hadrian’s wall

Parallel Computing in real world

Video https://drive.google.com/open?id=1d0pwvvQmm5VMyClm_kGlmB79H69ihHwk

:orange_book: Slides https://drive.google.com/open?id=1aPEIx98aDYaeJS-yY1JhqqnPPJbizDAJ

- Single Instruction Multiple Data (SIMD) and Single Program Multiple Data (SPMD)

- Parallel Computing in general

- Big Data and Simulations Compared

- What is hard to do?

Cloud Storage:

Video https://drive.google.com/open?id=1ukgyO048qX0uZ9sti3HxIDGscyKqeCaB

:orange_book: Slides https://drive.google.com/open?id=1rVRMcfrpFPpKVhw9VZ8I72TTW21QxzuI

- Cloud data approaches

- Repositories, File Systems, Data lakes

HPC and Clouds: The Branscomb Pyramid

Video https://drive.google.com/open?id=15rrCZ_yaMSpQNZg1lBs_YaOSPw1Rddog

:orange_book: Slides https://drive.google.com/open?id=1JRdtXWWW0qJrbWAXaHJHxDUZEhPCOK_C

- Supercomputers versus clouds

- Science Computing Environments

Comparison of Data Analytics with Simulation:

Video https://drive.google.com/open?id=1wmt7MQLz3Bf2mvLN8iHgXFHiuvGfyRKr

:orange_book: Slides https://drive.google.com/open?id=1vRv76LerhgJKUsGosXLVKq4s_wDqFlK4

- Structure of different applications for simulations and Big Data

- Software implications

- Languages

The Future:

Video https://drive.google.com/open?id=1A20g-rTYe0EKxMSX0HI4D8UyUDcq9IJc

:orange_book: Slides https://drive.google.com/open?id=1_vFA_SLsf4PQ7ATIxXpGPIPHawqYlV9K

- Gartner cloud computing hypecycle and priority matrix

- Hyperscale computing

- Serverless and FaaS

- Cloud Native

- Microservices

Fault Tolerance

Video https://drive.google.com/open?id=11hJA3BuT6pS9Ovv5oOWB3QOVgKG8vD24

:orange_book: Slides https://drive.google.com/open?id=1oNztdHQPDmj24NSGx1RzHa7XfZ5vqUZg

13 - Introduction to Cloud Computing

Introduction to Cloud Computing

This introduction to Cloud Computing covers all aspects of the field, drawing on industry and academic advances. It makes use of analyses from the Gartner group on future industry trends. The presentation is broken into 21 parts, starting with a survey of all the material covered. Note that this first part is A, while the substance of the talk is in parts B to U.

Introduction - Part A {#s:cloud-fundamentals-a}

- Parts B to D define cloud computing, its key concepts and how it is situated in the data center space

- The next part E reviews virtualization technologies comparing containers and hypervisors

- Part F is the first on Gartner’s Hypecycles and especially those for emerging technologies in 2017 and 2016

- Part G is the second on Gartner’s Hypecycles with Emerging Technologies hypecycles and the Priority matrix at selected times 2008-2015

- Parts H and I cover Cloud Infrastructure with Comments on trends in the data center and its technologies and the Gartner hypecycle and priority matrix on Infrastructure Strategies and Compute Infrastructure

- Part J covers Cloud Software with HPC-ABDS (High Performance Computing enhanced Apache Big Data Stack) with over 350 software packages and how to use each of its 21 layers

- Part K is first on Cloud Applications covering those from industry and commercial usage patterns from NIST

- Part L is second on Cloud Applications covering those from science, where the area is called cyberinfrastructure; we look at the science usage pattern from NIST

- Part M is third on Cloud Applications covering the characterization of applications using the NIST approach.

- Part N covers Clouds and Parallel Computing and compares Big Data and Simulations

- Part O covers Cloud storage: Cloud data approaches: Repositories, File Systems, Data lakes

- Part P covers HPC and Clouds with The Branscomb Pyramid and Supercomputers versus clouds

- Part Q compares Data Analytics with Simulation with application and software implications

- Part R compares Jobs from Computer Engineering, Clouds, Design and Data Science/Engineering

- Part S covers the Future with Gartner cloud computing hypecycle and priority matrix, Hyperscale computing, Serverless and FaaS, Cloud Native and Microservices

- Part T covers Security and Blockchain

- Part U covers fault-tolerance

This lecture describes the contents of the following 20 parts (B to U).

Introduction - Part B - Defining Clouds I {#s:cloud-fundamentals-b}

B: Defining Clouds I

- Basic definition of cloud and two very simple examples of why virtualization is important.

- How clouds are situated with respect to HPC and supercomputers

- Why multicore chips are important

- Typical data center

Introduction - Part C - Defining Clouds II {#s:cloud-fundamentals-c}

C: Defining Clouds II

- Service-oriented architectures: Software services as Message-linked computing capabilities

- The different aaS’s: Network, Infrastructure, Platform, Software

- The amazing services that Amazon AWS and Microsoft Azure have

- Initial Gartner comments on clouds (they are now the norm) and evolution of servers; serverless and microservices

Introduction - Part D - Defining Clouds III {#s:cloud-fundamentals-d}

D: Defining Clouds III

- Cloud Market Share

- How important are they?

- How much money do they make?

Introduction - Part E - Virtualization {#s:cloud-fundamentals-e}

E: Virtualization

- Virtualization Technologies, Hypervisors and the different approaches

- KVM, Xen, Docker and OpenStack

- Several web resources are listed

Introduction - Part F - Technology Hypecycle I {#s:cloud-fundamentals-f}

F: Technology Hypecycle I

- Gartner’s Hypecycles and especially that for emerging technologies in 2017 and 2016

- The phases of hypecycles

- Priority Matrix with benefits and adoption time

- Today clouds have passed through the cycle (they have emerged), but features like blockchain, serverless and machine learning are still on the cycle

- Hypecycle and Priority Matrix for Data Center Infrastructure 2017

Introduction - Part G - Technology Hypecycle II {#s:cloud-fundamentals-g}

G: Technology Hypecycle II

- Emerging Technologies hypecycles and Priority matrix at selected times 2008-2015

- Clouds star from 2008 to today

- They are mixed up with transformational and disruptive changes

- The route to Digital Business (2015)

Introduction - Part H - IaaS I {#s:cloud-fundamentals-h}

H: Cloud Infrastructure I

- Comments on trends in the data center and its technologies

- Clouds physically across the world

- Green computing and fraction of world’s computing ecosystem in clouds

Introduction - Part I - IaaS II {#s:cloud-fundamentals-i}

I: Cloud Infrastructure II

- Gartner hypecycle and priority matrix on Infrastructure Strategies and Compute Infrastructure

- Containers compared to virtual machines

- The emergence of artificial intelligence as a dominant force

Introduction - Part J - Cloud Software {#s:cloud-fundamentals-j}

J: Cloud Software

- HPC-ABDS (High Performance Computing enhanced Apache Big Data Stack) with over 350 software packages and how to use each of its 21 layers

- Google’s software innovations

- MapReduce in pictures

- Cloud and HPC software stacks compared

- Components needed to support cloud/distributed system programming

- Single Program Multiple Data (SPMD) and Single Instruction Multiple Data (SIMD)
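The MapReduce pattern listed in Part J can be illustrated with a minimal word-count sketch. It is not taken from the lecture slides: the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) and the sample documents are hypothetical, and everything runs in a single process, whereas a real framework would distribute the map and reduce steps across many nodes.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle_phase(pairs):
    """Shuffle: group all emitted values by their key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: sum the list of values collected for each key."""
    return {key: sum(values) for key, values in groups.items()}


def word_count(documents):
    """Run map, shuffle and reduce in sequence (illustrative, single process)."""
    pairs = []
    for document in documents:
        pairs.extend(map_phase(document))
    return reduce_phase(shuffle_phase(pairs))


# Hypothetical input: three tiny "documents".
counts = word_count(["big data cloud", "cloud computing", "big cloud"])
print(counts)
```

Each call to `map_phase` depends only on its own document, so in a distributed setting the map calls could run in parallel; the shuffle step is where data would move between nodes.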

Introduction - Part K - Applications I {#s:cloud-fundamentals-k}

K: Cloud Applications I

- Big Data in Industry/Social media; many of the best examples have NOT been updated, so some slides are old but still make the correct points

- Some of the business usage patterns from NIST

Introduction - Part L - Applications II {#s:cloud-fundamentals-l}

L: Cloud Applications II

- Clouds in science, where the area is called cyberinfrastructure

- The science usage pattern from NIST

- Artificial Intelligence from Gartner

Introduction - Part M - Applications III {#s:cloud-fundamentals-m}

M: Cloud Applications III

- Characterize Applications using NIST approach

- Internet of Things

- Different types of MapReduce

Introduction - Part N - Parallelism {#s:cloud-fundamentals-n}

N: Clouds and Parallel Computing

- Parallel Computing in general

- Big Data and Simulations Compared

- What is hard to do?

Introduction - Part O - Storage {#s:cloud-fundamentals-o}

O: Cloud Storage

- Cloud data approaches

- Repositories, File Systems, Data lakes

Introduction - Part P - HPC in the Cloud {#s:cloud-fundamentals-p}

P: HPC and Clouds

- The Branscomb Pyramid

- Supercomputers versus clouds

- Science Computing Environments

Introduction - Part Q - Analytics and Simulation {#s:cloud-fundamentals-q}

Q: Comparison of Data Analytics with Simulation

- Structure of different applications for simulations and Big Data

- Software implications

- Languages

Introduction - Part R - Jobs {#s:cloud-fundamentals-r}

R: Availability of Jobs in different areas

- Computer Engineering

- Clouds

- Design

- Data Science/Engineering

Introduction - Part S - The Future {#s:cloud-fundamentals-s}

S: The Future

- Gartner cloud computing hypecycle and priority matrix highlights:

- Hyperscale computing

- Serverless and FaaS

- Cloud Native

- Microservices

Introduction - Part T - Security {#s:cloud-fundamentals-t}

T: Security

- CIO Perspective

- Blockchain

Introduction - Part U - Fault Tolerance {#s:cloud-fundamentals-u}

U: Fault Tolerance

- S3 Fault Tolerance

- Application Requirements


14 - Assignments

Assignments

Due dates are on Canvas. Click on the links to check out the assignment pages.

14.1 - Assignment 1

Assignment 1

In the first assignment you will write a technical document on the current technology trends that you’re pursuing and the trends that you would like to follow. In addition, include some information about your background in programming and some projects that you have done. There is no strict format for this one, but we expect a 2-page written document. Please submit a PDF.

14.2 - Assignment 2

Assignment 2

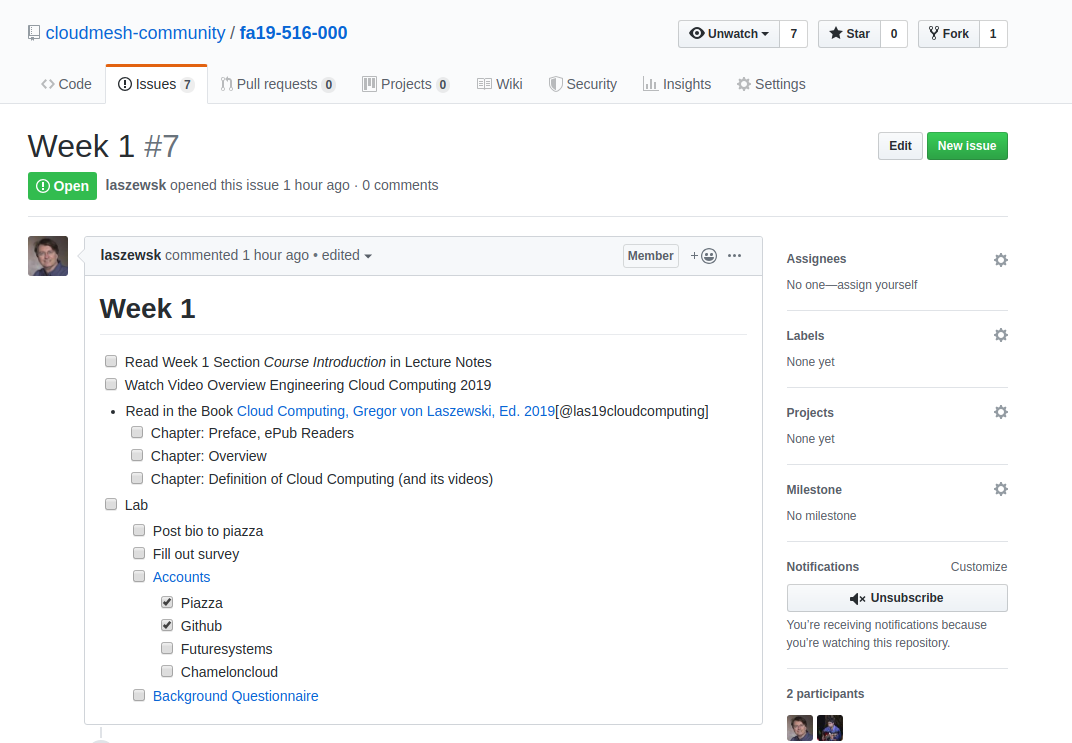

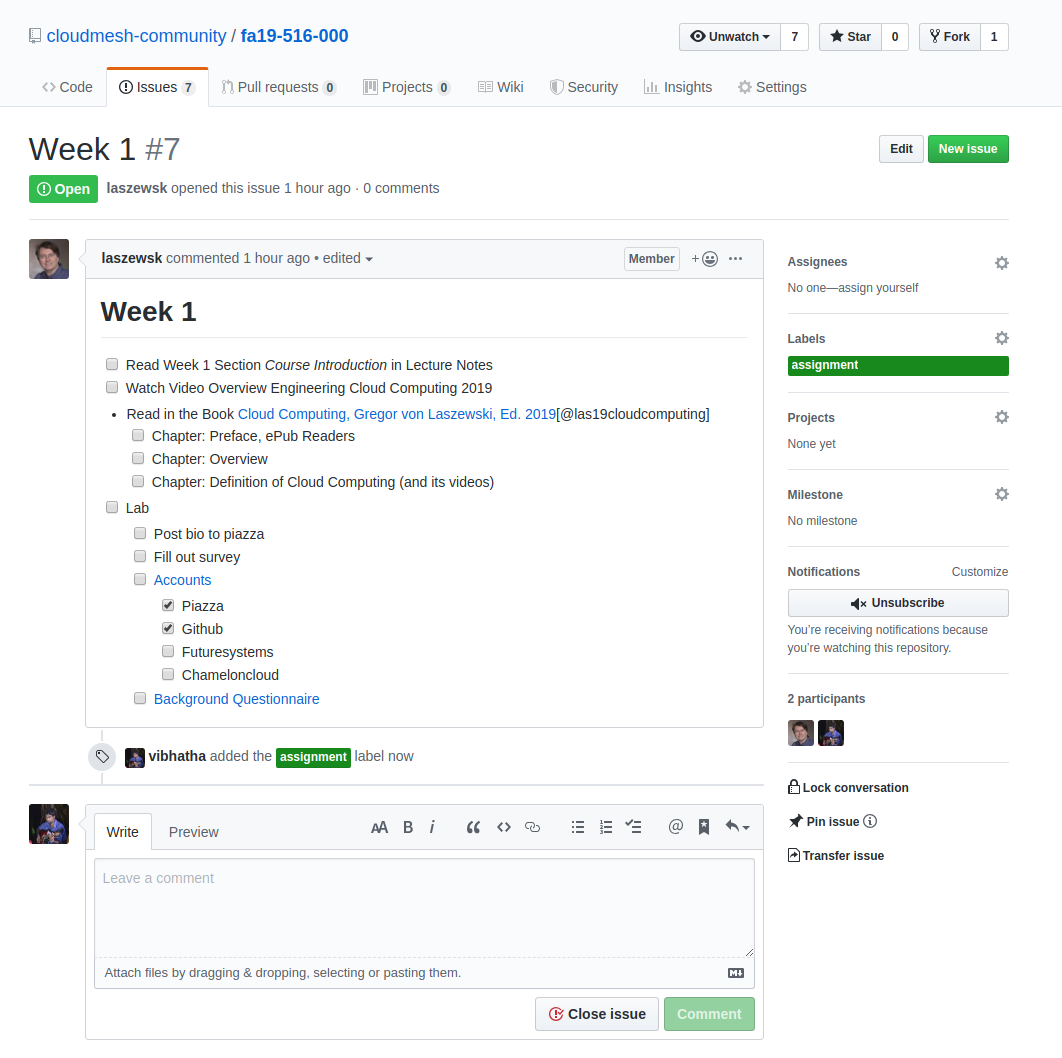

In the second assignment, you will be working on Week 1 (see @sec:534-week1) lecture videos. Objectives are as follows.

- Summarize what you have understood. (2 pages)

- Select a subtopic that you are interested in and research on the current trends (1 page)

- Suggest ideas that could improve the existing work (imaginations and possibilities) (1 page)

For this assignment we expect a 4-page document. You can use a single-column format for this document. Make sure you write exactly 4 pages. For your research section, make sure you add citations for the sources you refer to. If you have issues with how to do citations, you can reach out to a TA to learn how to do that. We will try to include some chapters on how to do this in our handbook. Submissions are in PDF format only.

14.3 - Assignment 3

Assignment 3

In the third assignment, you will be working on the lecture videos (see @sec:534-week3). Objectives are as follows.

- Summarize what you have understood. (2 pages)

- Select a subtopic that you are interested in and research on the current trends (1 page)

- Suggest ideas that could improve the existing work (imaginations and possibilities) (1 page)

For this assignment we expect a 4-page document. You can use a single-column format for this document. Make sure you write exactly 4 pages. For your research section, make sure you add citations for the sources you refer to. If you have issues with how to do citations, you can reach out to a TA to learn how to do that. We will try to include some chapters on how to do this in our handbook. Submissions are in PDF format only.

14.4 - Assignment 4

Assignment 4

In the fourth assignment, you will be working on the lecture videos (see @sec:534-week5). Objectives are as follows.

- Summarize what you have understood. (1 page)

- Select a subtopic that you are interested in and research on the current trends (0.5 page)

- Suggest ideas that could improve the existing work (imaginations and possibilities) (0.5 page)

- Summarize a specific video segment in the video lectures. To do this, follow these guidelines: mention the video lecture name and section identification number, and specify which range of minutes of that video lecture you have focused on (2 pages).

For this assignment we expect a 4-page document. You can use a single-column format for this document. Make sure you write exactly 4 pages. For your research section, make sure you add citations for the sources you refer to. If you have issues with how to do citations, you can reach out to a TA to learn how to do that. We will try to include some chapters on how to do this in our handbook. Submissions are in PDF format only.

14.5 - Assignment 5

Assignment 5

In the fifth assignment, you will be working on the lecture videos (see @sec:534-intro-to-dnn). Objectives are as follows.

Run the given sample code and try to answer the questions under the exercise tag.

Follow the Exercises labelled from MNIST_V1.0.0 - MNIST_V1.6.0

For this assignment, all you have to do is answer all the questions. You can use a single-column format for this document. Submissions are in PDF format only.

14.6 - Assignment 6

Assignment 6

In the sixth assignment, you will be working on the lecture videos (see @sec:534-week7). Objectives are as follows.

- Summarize what you have understood. (1 page)

- Select a subtopic that you are interested in and research on the current trends (0.5 page)

- Suggest ideas that could improve the existing work (imaginations and possibilities) (0.5 page)

- Summarize a specific video segment in the video lectures. To do this, follow these guidelines: mention the video lecture name and section identification number, and specify which range of minutes of that video lecture you have focused on (2 pages).

- Pick a sport you like and showcase how Big Data can be used to improve the game (1 page). Use techniques from the lecture videos and mention which lecture video covers each technique.

For this assignment we expect a 5-page document. You can use a single-column format for this document. Make sure you write exactly 5 pages. For your research section, make sure you add citations for the sources you refer to. If you have issues with how to do citations, you can reach out to a TA to learn how to do that. We will try to include some chapters on how to do this in our handbook. Submissions are in PDF format only.

14.7 - Assignment 7

Assignment 7

For a Complete Project

This project must contain the following details:

- The idea of the project,

It doesn’t need to be a novel idea, but a novel idea will carry more weight towards a higher grade. If you’re replicating an existing idea, you need to provide the original source you’re referring to. If it is a GitHub project, you need to reference it and showcase what you have done to improve it, or what changes you made in applying the same idea to solve a different problem.

a) For a deep learning project, if you are using an existing model, you need to explain how you used that model to solve the problem you proposed. b) If you plan to improve the existing model, explain the suggested improvements. c) If you are just using an existing model to solve an existing problem, you need to do an extensive benchmark. This kind of project carries fewer marks than a project like a) or b).

- Benchmark

There is no need to use a very large dataset. You can use Google Colab and train your network with a smaller dataset. Think of a smaller dataset like MNIST. The UCI Machine Learning Repository is a very good place to find such a dataset: https://archive.ics.uci.edu/ml/index.php